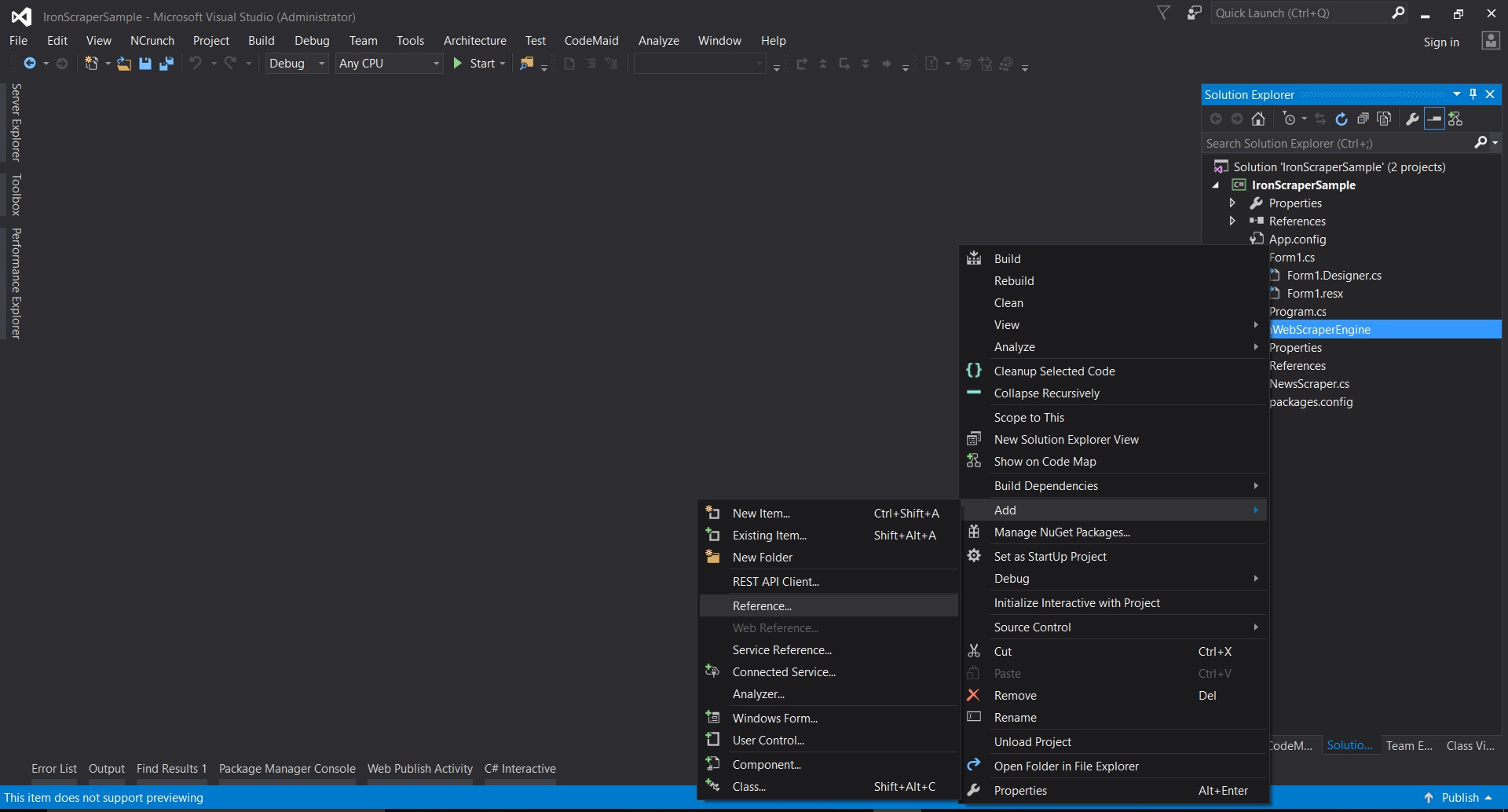

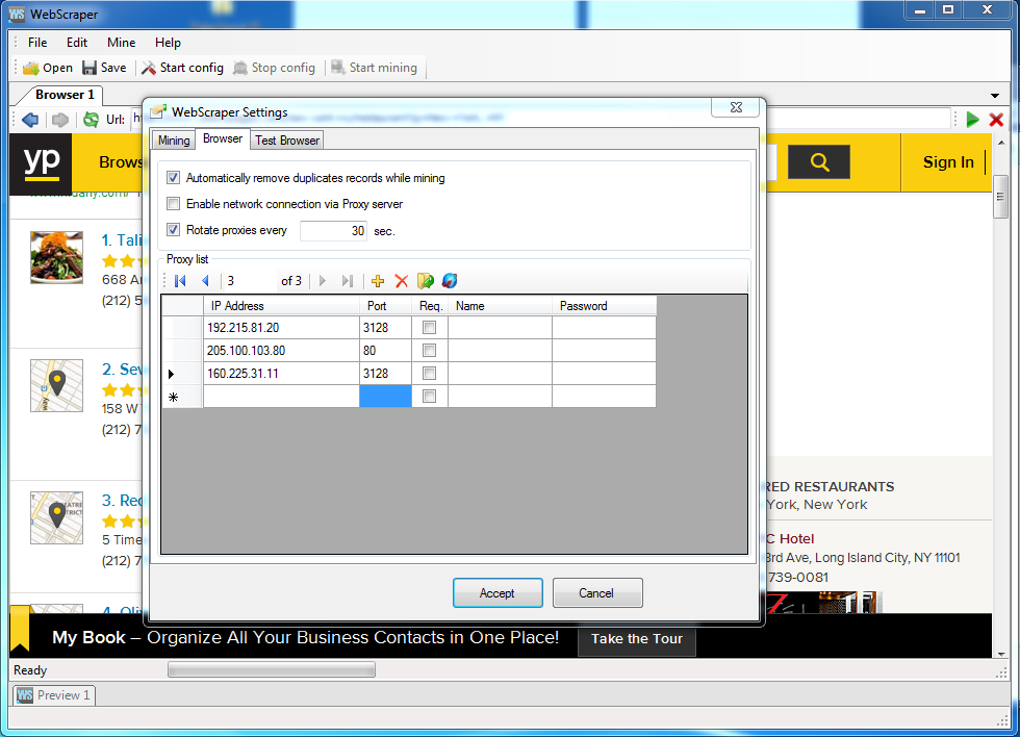

I am currently looking for a used car to buy, something fun that I can modify, and I’m sick and tired of checking the local used car website every single day for 30 minutes to an hour to see if there’s anything new or if anything has changed (say, the price has dropped).

So, I decided to solve this problem by writing a little web scraper that will routinely (every 5 minutes, because good and interesting deals go fast) scrape the website, check if anything has changed and then email me the changes. I am going to write a simple web API, which I can then poll on an interval from a different web app. I’ll use .NET Core with C# (because I don’t use it very often and I feel like using it right now) for the whole thing. There will be no UI as there’s really no need for one, because I will be mailing the changes to myself.

Here’s the general layout of the entire process:

1. Given a URL, make an HTTP request and download the data.
2. Parse the acquired HTML using AngleSharp.
3. Extract the relevant information from the parsed data and store it in a list.
4. If a next page link exists, go back to step one and repeat.
5. Find the differences between the newly acquired data and the data stored in the database (PostgreSQL), and update the database accordingly.
6. If differences exist, put them into a summary and email it to myself using Mailgun.

In the future I might make an Android app that will directly push the relevant data to my phone, and I might even sprinkle some machine learning on top (recommendations perhaps?), but that’s another article, or 100!

If you’re like me, then Visual Studio gives you a migraine, so I definitely want to skip using it whenever I can. Luckily we can skip it! The dotnet CLI tools are quite simple to use and well documented. You can even add controllers and models using the CLI and get the code generation utilities you would get in Visual Studio, but on the command line (meaning you could probably create integrated tools for whatever editor you’re using).

The code is going to be quite simple and you won’t need a special environment to run it, so you have many options really. I’m going to use the dotnet CLI to create a simple “webapi” project which I can later put live and ping on some interval from another web app, which will handle the timing. If you don’t have any other timing mechanism, you can use the CLI to generate a basic Windows service which will run in the background and make requests to your scraping target.

In .NET we run `dotnet new webapi -o web_scraper`. This will create a new project folder and set up a basic controller.

Before dealing with AngleSharp, let us first create a new controller so we can actually see something we wrote on the screen. .NET Core has CLI tools for that (don’t need VS, I’m all for that!). First we add a tool we need for code generation with `dotnet add package .Design`, then we run the command that generates our controller: `dotnet aspnet-codegenerator controller -name WebScraperController -async -api -outDir Controllers`. The last command creates a new controller called “WebScraperController” in the “Controllers” folder.

Let’s open it up! The generated file starts with `using System`, `using System.Linq` and a few more `using` directives, followed by `namespace website_scraper.Controllers`.

Additionally, I’m going to alter the route specified in the controller. Instead of `Route("api/")` I’m going to specify `Route("")`. This way I don’t have to write localhost:5001/api/WebScraper in the address bar; localhost:5001/WebScraper will do.
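Looking ahead to the comparison step in the process described above (finding the differences between freshly scraped data and what is already stored), here is a minimal sketch of how that could look in plain C# with LINQ. Everything named here is an assumption for illustration only: the `Listing` and `ListingDiff` records, their fields, and the `ListingComparer.Compare` helper are hypothetical, and the real site’s data will likely need different fields. It also assumes C# 9 or newer (for records) and that every listing has a unique `Id`.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical shape of one scraped listing; the real fields may differ.
public record Listing(string Id, string Title, decimal Price);

// Hypothetical result of comparing a fresh scrape with the stored data.
public record ListingDiff(
    IReadOnlyList<Listing> Added,
    IReadOnlyList<Listing> Removed,
    IReadOnlyList<(Listing Old, Listing New)> PriceChanged);

public static class ListingComparer
{
    // Compares stored listings with newly scraped ones, matching them by Id.
    // Assumes Ids are unique on each side; ToDictionary throws on duplicates.
    public static ListingDiff Compare(
        IEnumerable<Listing> stored,
        IEnumerable<Listing> scraped)
    {
        // Index both sides by Id so membership checks are cheap.
        var oldById = stored.ToDictionary(l => l.Id);
        var newById = scraped.ToDictionary(l => l.Id);

        // Present in the new scrape but not in the database.
        var added = newById.Values
            .Where(l => !oldById.ContainsKey(l.Id))
            .ToList();

        // Present in the database but gone from the new scrape.
        var removed = oldById.Values
            .Where(l => !newById.ContainsKey(l.Id))
            .ToList();

        // Present on both sides, but with a different price.
        var priceChanged = newById.Values
            .Where(l => oldById.TryGetValue(l.Id, out var old) && old.Price != l.Price)
            .Select(l => (Old: oldById[l.Id], New: l))
            .ToList();

        return new ListingDiff(added, removed, priceChanged);
    }
}
```

Calling `ListingComparer.Compare(stored, scraped)` returns the three lists in one object; if all three are empty there is nothing to email. Matching by `Id` rather than by title is a deliberate choice in this sketch, since a seller can edit the title or price of an existing ad without it becoming a new one.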
